What Are Micro Frontends

Microservices have taken back-end development by storm. Micro frontends borrow from this approach to organize complex application development. With micro-frontend architecture, a web app is segmented into distinct sections, each handled by independent teams.

Micro frontends address the issue of non-scalable front-end monoliths, possibly maintained by dozens of dev teams concurrently. In such situations, further enhancement and maintenance of the product can become close to impossible due to centralized complexity.

On top of that, a task as basic as changing a label in the application may take hours due to a slow build process. Some tools may also become unstable when stress-tested with potentially thousands of JavaScript files.

The idea is nothing new conceptually, as it is based on the divide-and-conquer approach. The very definition of this approach assumes that a team owns a particular part of the application end-to-end, from the database layer, through back-end services to the front-end.

Another important assumption is that the team is empowered to choose any framework it sees fit, based on its skill set and the business requirements of the part of the application it owns. Teams should be able to release their work independently, without coordination, and to work in isolation without stepping on each other’s toes.

This article covers an approach we took here at CCBill for our chunky admin systems. So far, it has helped us a lot to contain the amount of legacy JavaScript in our projects, and it has allowed for incremental upgrades of codebases, faster continuous-integration (CI) builds and simplified project structures.

Micro Frontends Use Case - Trading App

This article goes through the concept and the approach we took, based on an example trading application. In the following sections, we cover a number of techniques needed to achieve such a split, with a focus on practical examples. At CCBill, we took the path of front-end composition, where the submodules are fetched dynamically by the client using code splitting and lazy loading. The alternative is a back-end composer implemented at the services layer.

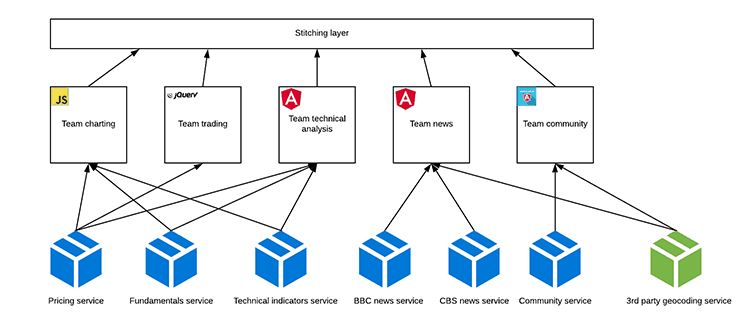

Figure 1: Example trading application dashboard view mock

The image above depicts the example trading web application’s multi-fragment view, composed of multiple micro frontends. Furthermore, some actions in each of those micro frontends may lead to a separate view that is handled entirely by one of the modules, for instance details of an idea or piece of news.

- Team charting (pure HTML and JavaScript)

- Team technical analysis (Angular)

- Team news (Angular)

- Team trades execution (jQuery)

- Team community (AngularJS)

Dependency Management

Since one of the core assumptions is that the teams are empowered to have a front-end stack of their choice, we have to mitigate the most obvious drawback of it – reduce the amount of duplicated scripts shipped to the browser.

When we look at our example dashboard, we would possibly load the full Angular stack twice, and this would clearly have a negative impact on load time and thus user experience of our product. We will go through some techniques one could implement to address this issue.

De-Duplication

One option to reduce redundancy between micro frontends is to leverage a concept referred to as externals in webpack. With externals set up, when the bundle is assembled, instead of placing the library code inside it, we refer to a global variable of our choice.

Here is an example of a jQuery external configuration:

…

/*

* Libraries provided outside of webpack:

* require('jquery') will result in referencing window.jQuery

*/

externals: {

jquery: 'jQuery'

},

…

Snippet 1: A way to define an external in webpack

This will result in webpack using the global variable when resolving jQuery imports.

In order to make this setup fully functional we have to deliver jQuery to the application in some way, for instance via an HTML script tag. Thus, the jQuery library will be loaded just once, despite the fact that each micro frontend is referencing it on its own. Another benefit is library version consistency across micro frontends.

When working with externals it is a good idea to include the externalized library as a dev dependency in your package.json. That way, you may run the front end in separation from the rest of the application as well as perform common tasks like unit tests execution.

Bundling And Code Splitting

If your application is not leveraging HTTP/2 yet, it might be a good idea to bundle common third-party dependencies together to limit the number of requests fetching resources.

Please bear in mind that this technique, even though it limits the number of network requests, has a negative effect on caching. If you bundle your third-party libraries together instead of referencing them one by one from a commonly used CDN, you force your users to download the common bundle at least once per newly released version, whereas there is a chance their browser had already cached the individual library from the CDN. The situation gets much worse without content-based cache-breaking or manual versioning of the bundle, especially in combination with frequent releases.

Together with webpack externals, it is easy to de-duplicate third-party libraries. In cases where bundling is implemented using UMD distros, one can amend the production bundling process (using gulp, grunt or other tools).

One of the major drawbacks of externalizing third-party dependencies is the potential need to sync dependency versions between the micro frontends. Although beneficial to the end product, this may pose challenges to the development teams, where some sort of synchronized updates need to be performed. Gradual updates are an option though, where two versions coexist as the teams take the chance to upgrade their dependency at their convenience. Both versions of the third-party library may be simultaneously deployed and served, using a bundle name or path as a version discriminator.

…

<script src="/stitching-layer/angular.bundle.8.0.0.js"></script>

…

<script src="/stitching-layer/angular.bundle.content-hash1.js"> </script>

…

Snippet 2: Example naming convention for the common bundle

Some libraries, such as zone.js used by Angular, have to be loaded exactly once per browser window. As an alternative to hosting such dependencies in the stitching layer, recent support for dynamic imports in build tools lets us lazy load them on demand, and only when they have not already been provided.
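The lazy-loading idea can be sketched as a small helper. This is only an illustration under stated assumptions, not the setup we run in production: `ensureLibrary`, `pendingLoads` and the parameter names are hypothetical, and the loader is assumed to be a function that returns a promise, for example a dynamic `import()` handled by the bundler.

```javascript
// Hypothetical helper (not part of any library): return a shared dependency
// from the global scope, or lazy load it when nothing has provided it yet.
const pendingLoads = new Map();

function ensureLibrary(globalName, loader, scope = globalThis) {
  // Already provided, for example by a script tag in the stitching layer.
  if (scope[globalName] !== undefined) {
    return Promise.resolve(scope[globalName]);
  }
  // Reuse an in-flight request so concurrent callers trigger one download.
  if (!pendingLoads.has(globalName)) {
    const request = Promise.resolve()
      .then(loader)
      .then((loaded) => {
        // ES modules expose the library on `default`; other builds may not.
        const library =
          loaded && loaded.default !== undefined ? loaded.default : loaded;
        scope[globalName] = library;
        return library;
      })
      // Forget the request once settled so a failed load can be retried.
      .finally(() => pendingLoads.delete(globalName));
    pendingLoads.set(globalName, request);
  }
  return pendingLoads.get(globalName);
}
```

A micro frontend could then call something like `ensureLibrary("jQuery", () => import("jquery"))` before bootstrapping, which does nothing extra when the stitching layer has already put `window.jQuery` in place.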

Isolating Version Clashes

There are at least three viable approaches to address dependency version clashes between micro frontends.

The first, and possibly the most demanding, approach is not allowing such situations to occur. This is easier said than done, as upgrading might be costly. At the same time, the old version of a given framework might already be deprecated, which makes it pointless to use for developing new modules. In a situation where neither migrating the existing codebase nor staying on the old version is an option, we can take one of the following paths to make the versions coexist.

Let us briefly mention the dreaded iframes as a means to create an isolated JavaScript execution scope. There are many drawbacks to composing your application using this approach. We have been there, and moving away from an iframes based architecture simplified one of our admin portals a lot.

Issues related to resizing and modals handling, navigation and page reload handling, and communication between iframes were resolved by moving away from iframes. Nevertheless, if it is the only viable option available, it will still work. However, iframes require a fair deal of additional work.

The last option we are going to go through is the use of closures. Bundlers like webpack use closures to build up bundles. The library has to be implemented in such a way that it neither pollutes nor relies on the global window object, which offers a more maintainable way of exposing its APIs.

UMD bundles, for instance, perform feature detection to determine the environment they run in and choose the most suitable way of exposing their APIs.
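To make the closure and feature-detection idea more concrete, here is a simplified UMD-style wrapper. It is a sketch rather than the output of any particular bundler, and `tradingFormat` and `formatPrice` are hypothetical names used only for illustration.

```javascript
// Simplified UMD wrapper. `tradingFormat` and `formatPrice` are
// hypothetical names used only for this illustration.
(function (root, factory) {
  if (typeof define === "function" && define.amd) {
    // An AMD loader such as RequireJS is present.
    define([], factory);
  } else if (typeof module === "object" && module.exports) {
    // CommonJS-like environment, e.g. Node.js or a bundler.
    module.exports = factory();
  } else {
    // Plain script tag: fall back to a single global.
    root.tradingFormat = factory();
  }
})(typeof globalThis !== "undefined" ? globalThis : this, function () {
  "use strict";

  // Everything declared here stays private to the closure.
  function formatPrice(value, currency) {
    return value.toFixed(2) + " " + currency;
  }

  // Only the returned object is exposed to consumers.
  return { formatPrice: formatPrice };
});
```

Because the library body lives inside the factory function, two micro frontends can each bundle their own copy, or even different versions, without overwriting one another, as long as neither falls back to the global branch.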

Communication Between Apps

Most frameworks provide a way of communication between the application parts: broadcast/emit in AngularJS, inputs/outputs/shared services in Angular, and custom events in jQuery. What we need is a framework-agnostic way of communication between the application parts.

One such option is CustomEvent. It has good browser support, with a polyfill available for older browsers. It ensures loose coupling between apps following the pub-sub pattern and can even leverage event delegation.

document.addEventListener("my:trader:buy", () => {console.log("Bought!");});

document.dispatchEvent(new CustomEvent("my:trader:buy"));

Snippet 3: Pub-sub done using Custom Events
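Building on the snippet above, events usually need to carry data and listeners need to be removed when a micro frontend is unmounted. The following is a minimal sketch of that; `publish` and `subscribe` are hypothetical helper names, and the payload shape is made up for the example. The `detail` option of CustomEvent is the standard way to attach a payload.

```javascript
// Hypothetical helpers wrapping CustomEvent; the names are illustrative.
// `target` defaults to `document` in the browser but can be any EventTarget.
function publish(eventName, detail, target = document) {
  target.dispatchEvent(new CustomEvent(eventName, { detail }));
}

function subscribe(eventName, handler, target = document) {
  const listener = (event) => handler(event.detail);
  target.addEventListener(eventName, listener);
  // Return an unsubscribe function so a micro frontend can clean up
  // after itself when it is unmounted.
  return () => target.removeEventListener(eventName, listener);
}
```

For instance, the trades execution team could call `publish("my:trader:buy", { symbol: "ACME", quantity: 10 })`, while the community team subscribes to the same event name without knowing who emits it.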

Alternatively, if we wish to use a more visible way of communication using global services – this is also achievable. In this case, we would need to utilize a global window scope to share those, written as plain classes. In order to limit the pollution of the global scope, it is encouraged to declare them in namespaces.

Let’s have a look at what this definition could look like.

window.SPA = window.SPA || {};

window.SPA.services = window.SPA.services || {};

class TradingSessionService {

/**

* The constructor of TradingSessionService class

* @constructor

*/

constructor() {

}

…

}

SPA.services.tradingSessionService = new TradingSessionService();

(function() {

"use strict";

/**

* The constructor of AuthService class

* @constructor

*/

function AuthService() {

}

…

SPA.services.authService = new AuthService();

}());

Snippet 4: Two ways of implementing global, shared services

The above snippet presents a way to define and expose globally a service utilizing modern as well as legacy syntax of class definition. In order to prevent global scope pollution, we place them inside the SPA.services namespace.

Sharing Code

The code can be easily shared via libraries published to an npm registry, thus allowing them to be pulled in by the micro frontends as any other dependency.

An important consideration is that we are potentially working in an environment where multiple different frameworks coexist. That forces us to write the common parts in a way that is agnostic and thus is reusable across them – meaning vanilla JavaScript and platform APIs are our safest bet.

In our trading application, we could identify that we are re-implementing data validation rules in each of our micro frontends. It is a good candidate for extraction to a shared library, as it encapsulates a common concern.

Implementing the set of validators as plain JavaScript functions would work perfectly since we will be able to leverage it across all frameworks used.
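As a rough sketch of what such a shared library could contain, here are a few validators written as pure functions. The function names and the validation rules themselves are hypothetical and only serve the example; in a published package they would be exported in whatever module format the consumers need.

```javascript
// Hypothetical shared validators, written as pure functions so that any
// framework (or none) can call them. The rules are illustrative only.
function isValidTicker(value) {
  // One to five upper-case letters, e.g. "ACME".
  return typeof value === "string" && /^[A-Z]{1,5}$/.test(value);
}

function isValidQuantity(value) {
  // A whole, positive number of units.
  return Number.isInteger(value) && value > 0;
}

function validateOrder(order) {
  // Collect the names of all invalid fields instead of failing fast,
  // so each micro frontend can decide how to present the errors.
  const errors = [];
  if (!isValidTicker(order.ticker)) errors.push("ticker");
  if (!isValidQuantity(order.quantity)) errors.push("quantity");
  return { valid: errors.length === 0, errors };
}
```

Since the functions take plain values and return plain objects, an Angular form validator, an AngularJS directive and a jQuery handler can all wrap them without any adapter code in the library itself.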

Sharing State

Although there are multiple ways of achieving a shared state, ideally one should facade it with a globally available service for ease of access and centralization of logic to access said state.

If the state tends to mutate over time, for example via an open WebSocket connection, it would be ideal to use some sort of notification system to notify interested parties of such events. A natural fit would be a pub-sub model, which can be achieved for instance using CustomEvents, or even a state management pattern like Redux, since it is framework agnostic.
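A facade of this kind can be very small. The sketch below is one possible shape, not the service we actually ship: `createSharedState` and the state fields are hypothetical, and the notification mechanism is a plain listener set rather than CustomEvents or Redux.

```javascript
// Hypothetical state facade; names and state shape are illustrative.
function createSharedState(initialState) {
  let state = Object.freeze({ ...initialState });
  const listeners = new Set();

  return {
    // Read access goes through the facade, never through a raw global.
    getState() {
      return state;
    },
    // Merge a partial update and notify every subscriber.
    update(patch) {
      state = Object.freeze({ ...state, ...patch });
      listeners.forEach((listener) => listener(state));
    },
    // Register a listener; the returned function removes it again.
    subscribe(listener) {
      listeners.add(listener);
      return () => listeners.delete(listener);
    },
  };
}
```

An instance could be exposed globally next to the other shared services, with the WebSocket message handler calling `update` and each micro frontend subscribing to the changes it cares about.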

Sharing Layer

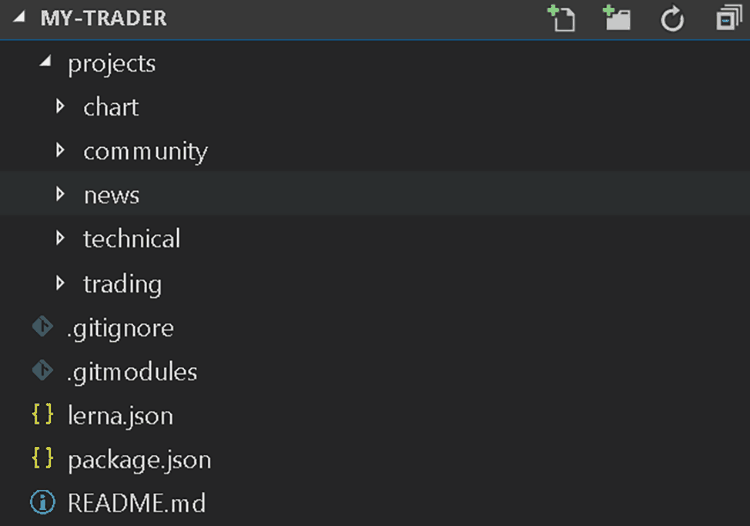

Where should all the common parts live? Common services, state, configs; they clearly cannot belong to any of the individual micro frontends. At this point, we have to introduce an overarching entity – the stitching layer.

The stitching layer should be as thin as possible, containing only the essentials needed for all the other parts of the application to work correctly. It also decouples all the individual parts, as it can act as a mediator between them.

We can assume each micro frontend should only know about its own existence and what is exposed by the stitching layer.

Figure 2: Cross component communication and split, including stitching layer

As mentioned already, one can put common parts of the application in the stitching layer, like state and services (for example, AuthService and TradingSessionService from our sample code), as well as make it responsible for root-level routing and loading up the modules of the application. It can also be seen as a place to compose all the parts together and facilitate any interactions between them.

In our case, since we are opting for front-end composition, the stitching layer would need to know which micro frontends to load up in a given view. This can be achieved using a framework like single-spa or a custom solution. For instance, for the /news context path it would load up the News micro frontend.
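For the custom-solution route, the core of the stitching layer can be a registry that maps context paths to lazy loaders. The following is a simplified sketch under our own assumptions; `createRegistry` is a hypothetical name and this is not how single-spa is implemented.

```javascript
// Hypothetical registry kept by the stitching layer. Each entry maps a
// context path to a function that lazy loads the micro frontend.
function createRegistry(loaders) {
  const loaded = new Map();

  return {
    load(contextPath) {
      if (!Object.prototype.hasOwnProperty.call(loaders, contextPath)) {
        return Promise.reject(
          new Error("No micro frontend for " + contextPath)
        );
      }
      // Cache the promise so each micro frontend is fetched only once,
      // even when several views request it at the same time.
      if (!loaded.has(contextPath)) {
        loaded.set(contextPath, Promise.resolve().then(loaders[contextPath]));
      }
      return loaded.get(contextPath);
    },
  };
}
```

In a real setup, each loader would typically be a dynamic `import()` of the micro frontend's entry bundle, so that the bundler's code splitting takes care of fetching it on first use.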

Navigation / Routing

Every MV* framework provides a complementary routing mechanism that uses the browser History API, applies fallbacks when required, and can even handle server-side routing.

The question is which of the micro frontends should be responsible for handling routing?

Dividing this responsibility between the micro frontends and the stitching layer is one possible approach. The stitching layer would be responsible for the highest-level routing (/news), determining which top-level view to load. Fine-grained routing would then be handled by the micro frontend itself (/news/:id). In this case, the news micro frontend will load the item identified by the sub-path /:id.
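The split of responsibilities can be illustrated with a small path helper. This is only a sketch; `splitRoute` is a hypothetical function, and a real router would also deal with query strings, hashes and base paths.

```javascript
// Hypothetical helper for the stitching layer: split a URL path into the
// top-level context and the remainder owned by the micro frontend.
function splitRoute(pathname) {
  const segments = pathname.split("/").filter(Boolean);
  if (segments.length === 0) {
    return { context: "/", subPath: "/" };
  }
  return {
    // Decides which micro frontend gets loaded, e.g. "/news".
    context: "/" + segments[0],
    // Handed over to the micro frontend's own router, e.g. "/42".
    subPath: "/" + segments.slice(1).join("/"),
  };
}
```

With this split, the stitching layer only ever looks at `context`, and the micro frontend only ever looks at `subPath`, so neither needs to know the other's route table.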

Styling And UX

Consistency may become an issue when working in a micro frontends setup. One can easily fall into a trap where different parts of the application have a different look and feel, especially if worked on by independent teams. This can result in a confusing user experience and an untidy feel to the application.

To address this issue, common UI/UX guidelines have to be set up and shared with the teams. This knowledge base needs to be propagated together with the tools and libraries that help achieve consistency, such as a common widget set or styling provider (e.g. Bootstrap, Material or an in-house solution). Again, the framework-agnostic part plays a huge role here, as it should be easy for each team to use the mentioned widgets.

When it comes to styling, the Web Components approach is particularly important thanks to CSS sandboxing using Shadow DOM. Without it, any enterprise-level setup with multiple teams working on a single product risks running into CSS rule collisions. To prevent such issues, set up naming conventions per team to limit or even remove the chance of a clash or of a rule leaking out of a micro frontend. This can be easily achieved using any of the available CSS pre-processors (e.g. Less or Sass).

In our case, the team working on the chart used the chart prefix and the team working on the news, the news prefix.

.chart {

&--candle {

...

}

&--updating {

...

}

}

Snippet 5: Prefixing CSS selectors done in Sass by the charting team

Working In Isolation

One major benefit of micro frontends is ownership of the code and the fact that a given team can work on and release a part of the application in isolation without affecting other teams’ work.

The team should be able to start up the micro frontend they are working on, not depending on other parts of the application. Testing it out together with the stitching layer or other micro frontends is an important concern as well. This will allow for early identification of any integration issues.

Note: The quality of daily work improves a lot too, when you can bring up the application quickly and easily.

There is a range of tools to choose from to deliver the mentioned functionality. We will focus on two options:

- webpack-dev-server

- browsersync

Ultimately, most lightweight HTTP servers with proxy capabilities will do the job.

Running As A Standalone Service

Our objective is to be able to start any micro frontend independently from the rest of the application.

Depending on the characteristics of the frontend you are building, you might need to stub or proxy communication with the back-end and provide any contextual data and resources that are required (for example, a logged-in user context or a third-party library that is usually provided by the stitching layer).

Assuming webpack is the bundler of your choice, using webpack-dev-server to serve your web app is straightforward. It automatically provides incremental builds for you as changes are made in any of the files being watched. In fact, this is also what Angular CLI leverages under the hood to achieve the same.

Also, use a separate index.html file to serve any context provided libraries and/or data stubbing. Whilst writing up the configuration, it is helpful to split it up into several files, containing common parts and dev, prod and test setups.

// webpack-merge v4 API; from v5 on, use: const { merge } = require("webpack-merge");

const webpackMerge = require("webpack-merge");

const commonConfig = require("./webpack.common.js");

module.exports = webpackMerge(commonConfig, {

devServer: {

index: "./index.html"

// your devserver config goes here

}

});

Snippet 6: webpack-dev-server configuration on top of common config

As mentioned before, we are including the common part of the webpack configuration and merging it with a custom setup written to run the dev server. We are specifying the index.html file location, which will be used as the entry-point for our micro frontend. There are a few additions in it compared to how it is exposed for consumption by the stitching layer. We will go through them next.

<!doctype html>

<html lang="en">

<head>

<link href="https://fonts.googleapis.com/icon?family=Material+Icons" rel="stylesheet">

<script src="https://cdnjs.cloudflare.com/ajax/libs/jquery/3.4.1/jquery.min.js"></script>

</head>

<body>

<script>

var tradingSession = {

// some data stubbed here

};

</script>

<app-news></app-news>

</body>

</html>

Snippet 7: HTML index page including resources and data normally provided by the stitching layer

There are two additions in this index.html file, i.e. we are providing contextual data inside the script tag as well as dependencies, normally provided by the stitching layer. In our example trading app, we went for material design and so we have to supply the necessary CSS, as well as the jQuery library.

Integration With The Rest Of The App

It would be highly convenient if we could see how the changes applied to a micro frontend play together with the rest of the application.

Our proposed solution will utilize browsersync. It has many capabilities, one of the most notable being the ability to replay actions done in one browser in other connected browsers. Our use-case for it today is its ability to serve as a proxy, with a very simple configuration to proxy any page, serving parts of it directly from a local directory.

var bs = require("browser-sync").create();

bs.init({

proxy: "https://qa.my-trading.com",

serveStatic: [{

route: "/community",

dir: "./projects/community"

}, {

route: "/news",

dir: "./projects/news"

}]

});

Snippet 8: Browsersync config proxying trading application deployed on a staging environment

We are now proxying all requests to qa.my-trading.com, but serving the static assets for the /community and /news contexts from the local ./projects/community and ./projects/news directories.

Monorepos, Lerna And Git Submodules

Organizing your project structure is as important a concern as how the codebase is split up. Monorepositories come in handy when working in such a setup.

In our proposed setup, the stitching layer would live at the root of the monorepository. That gives it good visibility into its components and lets it act as a hub for any cross-frontend tasks, such as defining the browsersync proxy for integration testing.

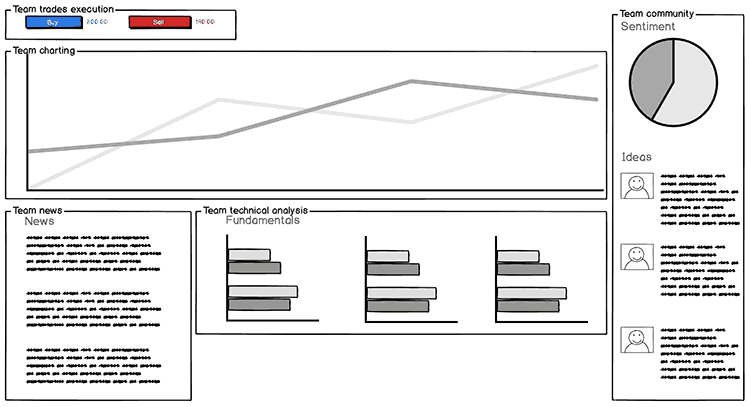

Figure 3: Proposed monorepository structure

As depicted above, we define a projects directory that contains the micro frontends the application consists of. This is where git submodules come in. Utilizing them, we are able to host each micro frontend’s repository separately, whilst providing an option to have all of them in an aggregated setup. Commit history, branches and tags, and CI build jobs all remain separate and local to each micro frontend.

Lerna is quite an obvious option here when it comes to handling monorepositories. It enables us to execute any npm script in each micro frontend if need be, for instance to build the entire application or to unit test multiple micro frontends after a change made in a shared library.

Setting up common standards between the projects, such as a naming convention for the scripts, is what makes this setup possible. Having common standards also allows team members to switch between projects more easily. For example, the build:ci command serves exactly the same purpose in each micro frontend in this case, and its functionality does not need to be looked up.

lerna run build:ci --loglevel info --scope '{news,chart}'

Snippet 9: Executing build:ci command in two submodules via lerna

The result of the above command will be lerna executing the build:ci script in news and chart, outputting the logs from each of the submodules.

HTTP/2 Impact

Traditional bundling becomes problematic when working as per the proposed setup. Bundling for reusability and avoidance of duplication becomes less straightforward.

Some of the mentioned techniques can help – common libraries hosted in the stitching layer, bundled separately. This approach is often referred to as code-splitting and is a middle ground between bundling your application all together into one file and loading each individual file separately. Bundling makes caching less efficient as a change in one of the files in the bundle forces the user to download the entire bundle all over again.

Luckily, with HTTP/2 this problem largely goes away, as bundling becomes far less important. The browser is able to handle a large number of concurrent requests effectively, and server push, where it is supported, can limit the number of roundtrips to and from the server. If made available, HTTP/2 makes working in a micro frontends setup simpler and the cost of simply including each dependency in the micro frontend negligible thanks to browser caching.

Comparison With Back-End Composition

Another common approach to micro frontends is what is termed back-end based composition, or fragments serving.

In this section, we will highlight the pros and cons of front-end based composition over back-end based composition.

Cons

- Thicker stitching layer, which could be seen as a micro frontend of its own.

- More code and logic pushed to the client.

- Poor SEO: as page parts are requested dynamically, crawlers have limited capability of indexing such pages. Google has recommended dynamic rendering as a temporary workaround for this problem.

- More roundtrips and HTTP overhead when fetching composites for complex views.

Pros

- Faster initial paint/load, better UX.

- Progressive rendering of the pages, with no need to wait for the slowest response before HTML is sent to the browser.

- Decoupling from back-end, easy to run as a standalone using one HTTP server.

- Option to easily proxy API requests for part of the app.

- Separated CI jobs, quicker builds, easier maintenance, smaller separate codebases.

- No need to bring up back-end stack, front-end can be worked on independently.

- Ready open source solutions for handling compositions, like single-spa.

It is clear that there are a lot of benefits to front-end composition, but also significant drawbacks (such as poor SEO), which may not be acceptable for all use cases.

Conclusion

Micro frontends offer an appealing alternative to the monolithic approach of building user interfaces. They allow for greater flexibility not only in utilization of in-browser frameworks but also build time setups and repository structures.

It is important to remember this approach is not always of great benefit, especially for small to medium-sized applications. However, the closer one gets to enterprise-grade front-ends, the more crucial it becomes to divide them up into smaller chunks. These modules can then evolve independently and have well-defined ownership, with a dedicated team maintaining them.

This pattern, as is the case for microservices, also poses some challenges and complications. Many aspects, such as initial setup, communication between applications, redundancy and reuse, are more difficult to handle. Therefore, the cost of added complexity must be included when determining your return on investment based on your particular use case.

Going for front-end composition is clearly not the way to go if SEO is a big concern for you. It is more suited for chunky admin-like systems. However, it also offers many advantages such as better decoupling, lower maintenance costs, separation of CI jobs, and the capability to load the front-end in isolation of other layers and systems.

Ultimately, what we have offered here today is another valid tool for your front-end development toolbox. One that has merit and is effective under the correct circumstances.